Written by

Reading time:

7 min

Minutes

You opened the test results this morning. Twelve ads shipped last week. Three are spending. Nine aren't. The three that worked had different hooks, different visuals, different angles, different audiences. The nine that didn't had all of that too. You can't tell which decision made the winners work.

You're not testing wrong. You're not testing at all. You're shipping variations and reading the leaderboard.

The gap between an ad Meta wants to spend on and an ad Meta buries isn't a design gap. It's not a font, a badge, a CTA. It's a brief gap. Someone made one specific decision about who the ad was for, before production started, and that decision is what Meta's algorithm is reading in the first 48 hours.

Most brands can't see this because their tests aren't built to surface it. They ship twelve ads that differ on four variables at once, wait a week, and try to reverse-engineer which decision the algorithm liked. They can't. The test wasn't built to answer that question.

This post is the framework that does.

Why most Meta creative tests teach you nothing

Most brands aren't running tests. They're running variation batches and calling them tests.

The pattern looks like this: brief twelve ads for the week, change something in each one, ship them all into the same campaign, wait seven days, look at CPA, declare a winner. The "winner" is the ad that spent the most without breaking the target. Everyone agrees it's the winner. Nobody can say why.

That isn't a test. A test isolates one variable. If ad A and ad B differ in hook, visual, angle, and audience, and ad A wins, you've learned nothing about what to brief next. You've learned that some combination of four decisions worked better than another combination of four decisions, in one week, under one set of auction conditions. That's not a signal. That's noise you can't act on.

The problem compounds with how Meta allocates spend. The algorithm shifts budget toward whatever gets early traction, which means by day three you're not even comparing the same conditions across your "test." Ad A got 70% of the budget. Ad B got 12%. The CPA gap you're reading isn't the gap between two creatives: it's the gap between two creatives running on completely different spend levels. Most "tests" end up being post-hoc rationalizations of what Meta already chose to favor in the first 48 hours.

The brands that compound on Meta in 2026 stopped running variation batches. They learned to read the account before they read the test.

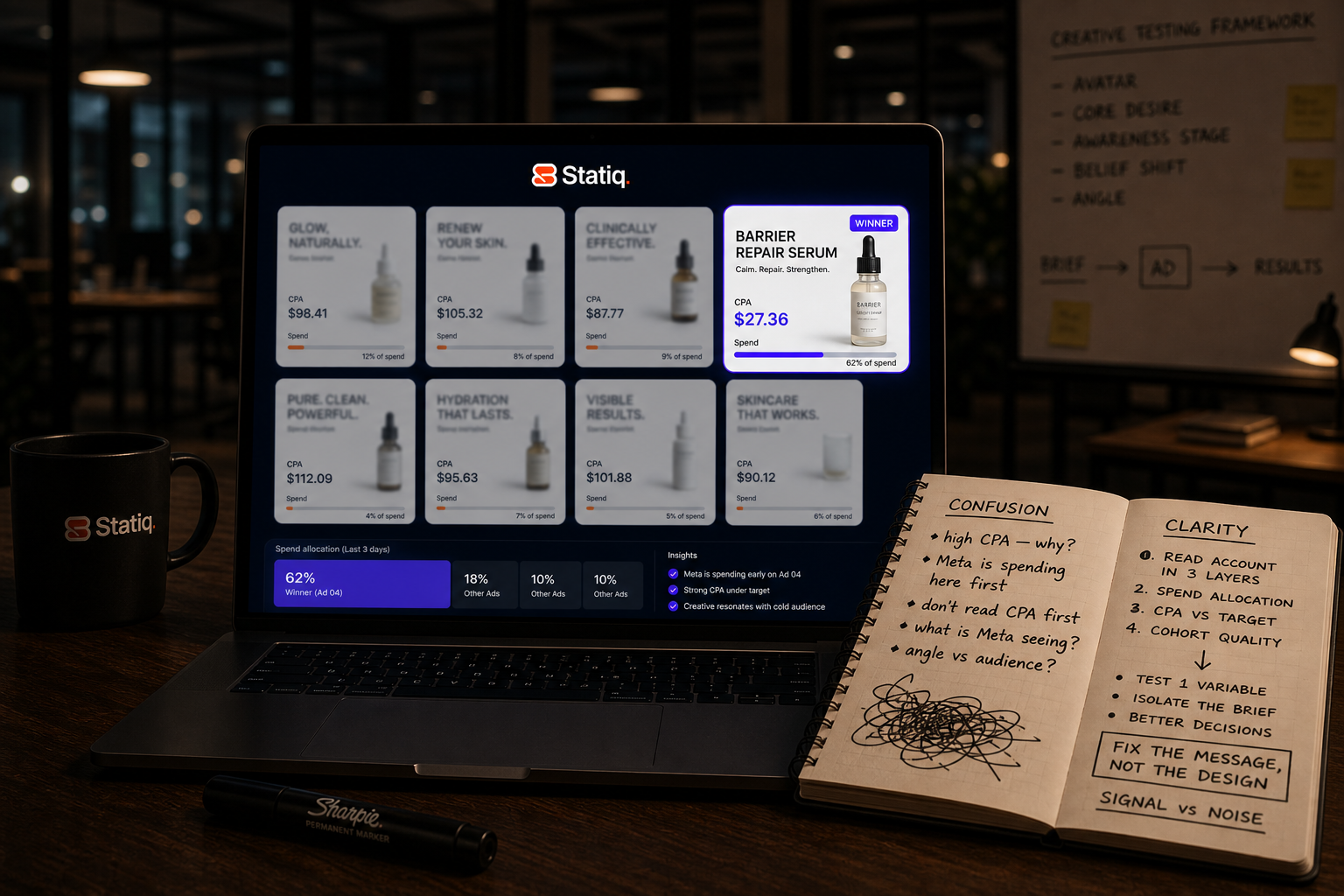

Read what Meta is telling you before you read CPA

Before you read a single performance metric, Meta has already told you which ad it thinks is going to work. The framework Statiq uses across the portfolio reads the account in three layers, in this order:

- Layer 1: Where Meta is allocating spend

Upload five ads into an ad set. Within 48 hours, the algorithm starts reallocating budget. By day three, one ad has consumed most of the budget while others have barely spent. Meta is running a real-time auction and telling you which creative has the highest probability of converting, before you've read a single result. Most brands skip this layer entirely. - Layer 2: CPA against your target

This is where most brands start. It's the right metric to optimize against, but it's the second question, not the first. If the top-spending ad is hitting your CPA target, scale it. If it's above target, the instinct is to kill it: don't. Meta already decided it wants to spend there. The problem is downstream: for a static, usually the headline or visual not delivering the message. One fix on an ad Meta already wants to spend on, and the same creative starts converting. - The hit rate benchmark

Statiq's benchmark across the portfolio is 15% of ads produced hitting CPA target. That number varies by category and spend level, but every account needs one. If hit rate drops while production stays flat, creative quality is slipping weeks before CPA reports tell you. - Layer 3: Cohort quality

The layer most brands never reach. We've scaled ads with on-target CPA where Meta kept pushing more spend, and a quarter later the customers those ads brought in had high churn and didn't match the ICP. In-platform: green. Business: bleeding.

To check: ask your customer service team what they think of the customers that came in last quarter. Are they staying? Are they who you actually want? By the time cohort quality shows up in revenue, you're already three months behind.

Layer 1 tells you what Meta thinks. Layer 2 tells you if it's profitable. Layer 3 tells you if it's sustainable. Most brands stop at two. The framework in the rest of this post assumes you're reading all three.

The variables worth isolating come from the brief, not the design

When most brands talk about "creative testing," they mean testing things they can change inside Figma: different fonts, different badge colors, bigger CTA, smaller CTA, background swapped from white to gradient, headline copy rewritten three ways.

None of those variables are going to move an ad from one Meta buries to one Meta scales. The gap between those two outcomes isn't a design gap. It's a brief gap.

Looking across 50+ accounts and 9,000+ ads a month, the structural difference between the top 5% of ads and the bottom 5% isn't visible in the design. It's visible in the brief that produced the design file. The top 5% all have a brief where the right questions were answered with specificity. The bottom 5% have a brief where one or more of the questions was skipped: usually awareness stage or belief shift, the two most often treated as optional.

The variables that actually move CPA get decided before production starts. They live in the brief, specifically in the five questions every brief should answer:

- Avatar

Who specifically is this ad for? Not the demographic. The person, the moment, the state of mind they're in when the ad lands. - Core desire

What does that person actually want? Usually a feeling, not a feature. - Awareness stage

Do they know they have the problem? Do they know what's causing it? Do they know solutions exist? The answer changes everything. - Belief shift

The one thing they need to stop believing, or start believing, before they'll buy. - Angle

The entry point. Where in their day does this ad find them, and what does it say first?

The five-question brief is the foundation of what we call performance design: the discipline that determines whether an ad has a chance of working before anyone opens a design file.

Testing means changing one of those five answers and keeping the other four fixed. If you're testing avatar, the other stages and the angle stay the same; and you ship three to five concept variations that speak to a different avatar. Now when one wins, you know what won. You know what to brief next.

The design variables: font, badge, CTA copy, button placement, still get tested. But they get tested after the brief variable wins, on the ad that already proved the brief works. That's where design optimization belongs. Not at the start of the funnel of decisions, at the end of it.

How to structure a week of testing at $50K–$500K/month spend

A useful test has a specific shape. Five elements:

1. One isolated brief variable

Pick which of the five questions you're testing: avatar, core desire, awareness stage, belief shift, or angle. The other four stay fixed across every concept in the test.

2. Three to five concept variations

Enough to give the variable a real read. Fewer than three and you can't separate signal from noise. More than five and you're back to volume shipping.

3. A hypothesis written before launch

One sentence is enough. Example: "We expect the new avatar, skeptical first-time buyer, to outperform the current avatar, returning customer, on cold traffic, because the angle bypasses the price objection that's blocking conversion." Without this, you're reading data after the fact and deciding what it means. That isn't testing. That's storytelling.

4. A minimum spend threshold per ad

Somewhere between $200 and $500 depending on your CPA target. Below that, you don't have enough impressions to mean anything. Killing ads before they clear the threshold is how brands kill winners that would have compounded.

5. A single campaign, not three

Ship the variations into one campaign so they're competing in the same auction. Splitting them across campaigns means Meta's algorithm is making different allocation decisions in each one, and Layer 1 stops telling you anything useful.

Once the test is live, every ad is in one of three states:

- Below the spend threshold: incomplete, leave it alone.

- Above the threshold and 30%+ over CPA target: a loser, kill it.

- Above the threshold and under CPA target: a winner, scale it.

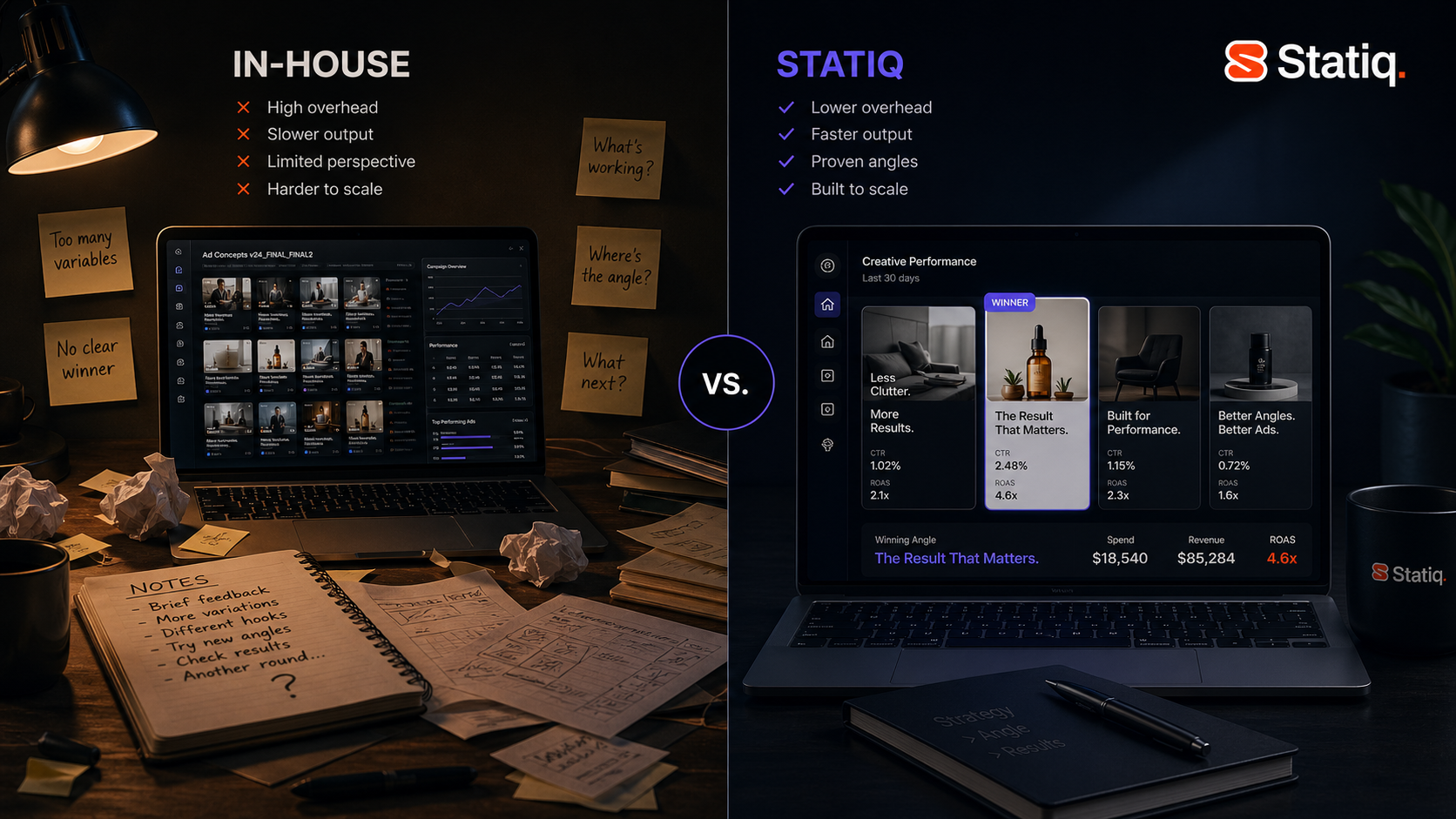

That's the framework. The harder problem is volume.

Most brands break it on volume. They ship 100 ads a week because someone on X said volume is the strategy. It isn't. Looking across 50+ accounts spending millions a month on Meta, the pattern is consistent: when brands cut from ~100 ads a week to ~50 ads built on sharper briefs, hit rate roughly doubles. Same number of winners, half the testing budget burned, twice the signal coming back.

The math is straightforward. At $200 per ad in testing spend, 100 ads is $20K a week. If 10% hit target, you burned $18K to find 10 winners. Cut to 50 ads on better briefs, hit rate goes back to 20%, and you find the same 10 winners with $10K left in the budget. The ads that hit also scale harder: a well-briefed concept doesn't just show up in the winners more often, it spends more when it gets there. If the volume model is already breaking your team, here's what the structural problem looks like: and how to fix it without adding headcount.

Applying this framework to an account already running ads

Most VPs of Growth reading this don't have the option to start clean. The account already has hundreds of live ads, three quarters of campaign history, and a creative team mid-sprint. The framework has to apply to the account you already have.

The diagnostic Statiq runs on every new client account starts with the same move: pull the 10 highest-spending ads from the last 90 days.

Then map each one against the five questions:

- Avatar: Who was this ad implicitly for?

- Core desire: What did it promise?

- Awareness stage: Where in the funnel did it enter?

- Belief shift: What did it need the viewer to stop or start believing?

- Angle: What was the entry point?

What you'll find every time: the 10 top-spending ads cluster around two or three answers per question. Same avatar, repeated. Same awareness stage, repeated. Same angle, repeated with minor variations. The account isn't testing five variables: it's been hammering the same two or three combinations for a quarter and calling that a creative strategy.

The gaps are the test list. Every answer to every question that isn't represented in the top 10 is a hypothesis you haven't tested. A different avatar. A different awareness stage. A different belief shift. Each one is a brief variable to isolate, three to five concepts deep, run through the same structure: one isolated variable, three to five concepts, hypothesis before launch.

If the top 10 ads are clustering tight, you don't need more ads. You need different briefs. Here's what the data from 9,000+ ads shows about what actually makes them work.

Three signals your testing isn't teaching you anything

If any of these sound familiar, the problem isn't the ads. It's the system producing them.

- Your test reports compare ads that differ in more than one variable

Pull up the last test you ran. If the winning ad and the losing ad differ in hook, visual, angle, and audience, you didn't run a test. You ran a variation batch. The win tells you nothing about which decision drove the result, and the next round of briefs has nothing to act on. - No hypothesis was written before launch

Check the documentation from your last testing cycle. If there's no one-sentence statement of what you expected to happen and why, the test was never set up to teach you anything. You're reading the data after the fact and deciding what it means. - Your top 10 highest-spending ads cluster around the same two or three brief answers

Same avatar, same awareness stage, same angle, with minor variations across hook and visual. The account looks busy because it's shipping volume, but the brief hasn't moved in six months. The next net-new winner is in the gaps, and you don't have a system pointing you toward them.

If any of that sounds familiar, the problem has a fix. It starts with the brief. Let's talk!

Because most tests change multiple variables at once. When the winning ad differs from the losing ad in hook, visual, angle, and audience, the result can't be traced back to a single decision. The next round of briefs has nothing to act on.

How many ads should a DTC brand test per week on Meta?

Across 50+ accounts, Statiq's data shows hit rate roughly doubles when brands cut from ~100 ads a week to ~50 ads built on sharper briefs. Same number of winners, half the testing budget burned. The number to track isn't ads shipped: it's spend on ads that didn't work.

What's the difference between testing the design and testing the brief?

Testing the design means changing fonts, badges, CTAs, and layouts. None of those variables determine whether Meta will spend on the ad. Testing the brief means changing one of the five upstream decisions: avatar, desire, awareness stage, belief shift, angle, that determine whether the ad has a chance of working before production starts.

When should a winning Meta ad be scaled?

Only after it has cleared the minimum spend threshold and is sitting under your CPA target. An ad above threshold but significantly over CPA target is a loser: kill it. An ad below threshold is incomplete, leave it alone. Scaling before an ad clears the threshold kills winners that would have compounded.

.avif)